Overview

The MoEDAL experiment (Monopoles and Exotics Detector at the LHC) is the seventh major experiment at CERN’s Large Hadron Collider. It is designed to probe the LHC’s particle collisions for signs of Paul Dirac’s hypothesised magnetic monopole, as well as other highly ionising signs of new physics that the other LHC experiments cannot easily look for. To obtain results quickly from the LHC’s 13 TeV run, the MoEDAL Collaboration needed to set up and run millions of GEANT4-based simulations. Thanks to the infrastructure provided by GridPP, this was possible within a matter of days.

The MoEDAL experiment in the VeLo cavern at Interaction Point 8. Credit: The MoEDAL Collaboration/CERN 2016.

The problem

MoEDAL is a relatively new – and relatively small – experiment at the LHC. Housed at Interaction Point 8, it shares the experimental cavern with the LHCb experiment. Despite its size, it has a comprehensive physics programme and needs to perform full particle transport simulations of the detector subsystems and their surroundings in order to understand how monopoles (or other new physics that might show up through highly ionising signatures) will interact with MoEDAL’s experimental apparatus. Each choice of parameters – such as the monopole mass or magnetic charge – requires hundreds of thousands of simulated events. Local batch systems used by collaboration members were struggling to cope with the scale of computing required for the latest 13 TeV dataset.

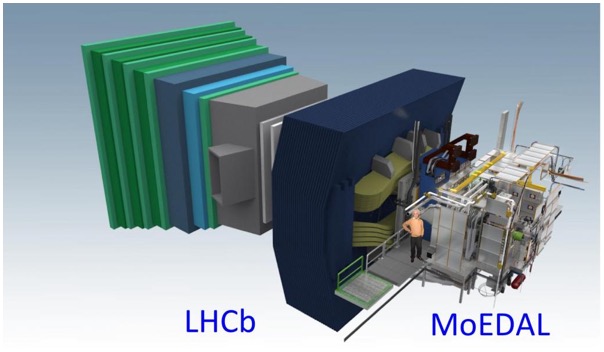

The MoEDAL and LHCb geometry models. Image credit: The MoEDAL Collaboration/CERN 2016.

The solution

Fortunately, GridPP were able to help. Although MoEDAL is technically an LHC experiment, the size of the collaboration meant that the infrastructure provided by the New User Engagement Programme was ideal for quickly getting MoEDAL’s software group up and running on the Grid. Using the vo.moedal.org Virtual Organisation and a CVMFS software repository at CERN, the MoEDAL and LHCb software could be run on the Grid using GridPP DIRAC. Ganga provided a user-friendly interface for development and testing of the simulation workflow on a local batch system before switching to the GridPP DIRAC back-end. The scalability of the system, with Ganga able to manage hundreds of jobs at a time, meant that a wide range of parameters could be explored in parallel. In all, 36 million events were simulated in a few days using GridPP resources, providing the collaboration with the results they needed for a demanding publication schedule.
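To illustrate how a parameter scan of this kind breaks down into many independent jobs, here is a minimal Python sketch. It does not call Ganga or DIRAC and is not the collaboration's actual workflow. The `SimJob` class, the `plan_jobs` function, the mass and charge values, and the event counts are all hypothetical and chosen only for illustration. The `backend` strings simply echo the names of Ganga's local and DIRAC back-ends, to show how a single setting could separate a small test run from a full Grid run.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class SimJob:
    """One simulation job: a parameter point plus a slice of its events."""
    mass_gev: int      # hypothetical monopole mass in GeV
    charge_gd: int     # hypothetical magnetic charge, in units of the Dirac charge
    first_event: int   # index of the first event simulated by this job
    n_events: int      # number of events simulated by this job
    backend: str       # label only: "Local" for testing, "Dirac" for Grid running


def plan_jobs(masses_gev, charges_gd, events_per_point, events_per_job,
              backend="Local"):
    """Split every (mass, charge) point into jobs of at most events_per_job events."""
    if events_per_point <= 0 or events_per_job <= 0:
        raise ValueError("event counts must be positive")
    jobs = []
    for mass, charge in product(masses_gev, charges_gd):
        # Walk through the events for this point in chunks of events_per_job.
        for first in range(0, events_per_point, events_per_job):
            n = min(events_per_job, events_per_point - first)
            jobs.append(SimJob(mass, charge, first, n, backend))
    return jobs


# Illustrative values only; these are not MoEDAL's actual scan settings.
masses = [500, 1000, 1500, 2000]
charges = [1, 2, 3]

# A small test plan, then the same scan at full scale with one setting changed.
test_jobs = plan_jobs(masses, charges, events_per_point=1_000, events_per_job=500)
grid_jobs = plan_jobs(masses, charges, events_per_point=300_000,
                      events_per_job=2_500, backend="Dirac")

print(len(test_jobs), "test jobs on backend", test_jobs[0].backend)
print(len(grid_jobs), "Grid jobs covering",
      sum(j.n_events for j in grid_jobs), "events")
```

Because every job carries its own parameter point and event range, the jobs are independent of one another, which is what allows a scan like this to be spread across many Grid worker nodes at once.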

What they said

Having worked with the Grid before, I knew that GridPP’s resources could deliver what we needed in the timescale we had to work with. The fantastic team behind GridPP DIRAC were able to configure our VO at supporting sites with ease and get us up and running with large-scale simulations fast. Ganga’s ability to switch between local and Grid running meant that the development and testing phases were made simpler too; by changing one configuration parameter we could switch from running a few local jobs to hundreds on the Grid with ease.

Dr Tom Whyntie, Software Coordinator, MoEDAL Collaboration/QMUL

Further details

Services used

- Ganga: Ganga was used for local and batch submission during development of the workflow, and then for Grid job submission and management;

- DIRAC: The GridPP DIRAC instance was used for Grid job submission and management, via the Ganga interface;

- DIRAC File Catalog (DFC): The DFC was used for storage and management of the LHE simulation files (used as input to the simulation jobs) and output ROOT files containing the simulation results;

- CernVM: CernVMs provided a Grid-friendly system for MoEDAL members to use when not using the CERN lxplus batch system, as CernVMs can be used for running Ganga and DIRAC via CVMFS;

- CernVM-FS: The MoEDAL CVMFS repository was used for deployment and management of the MoEDAL software and access to the LHCb, Ganga and DIRAC software.

Supporting GridPP sites

- University of Liverpool

- Queen Mary University of London

Virtual Organisation(s)

- vo.moedal.org

Publications

- The MoEDAL Collaboration, “Search for magnetic monopoles with the MoEDAL prototype trapping detector in 8 TeV proton-proton collisions at the LHC”, JHEP 2016, 67 (2016)

Acknowledgements

This work was supported by the UK Science and Technology Facilities Council (STFC) via GridPP5 and grant number ST/N00101X/1.