Overview

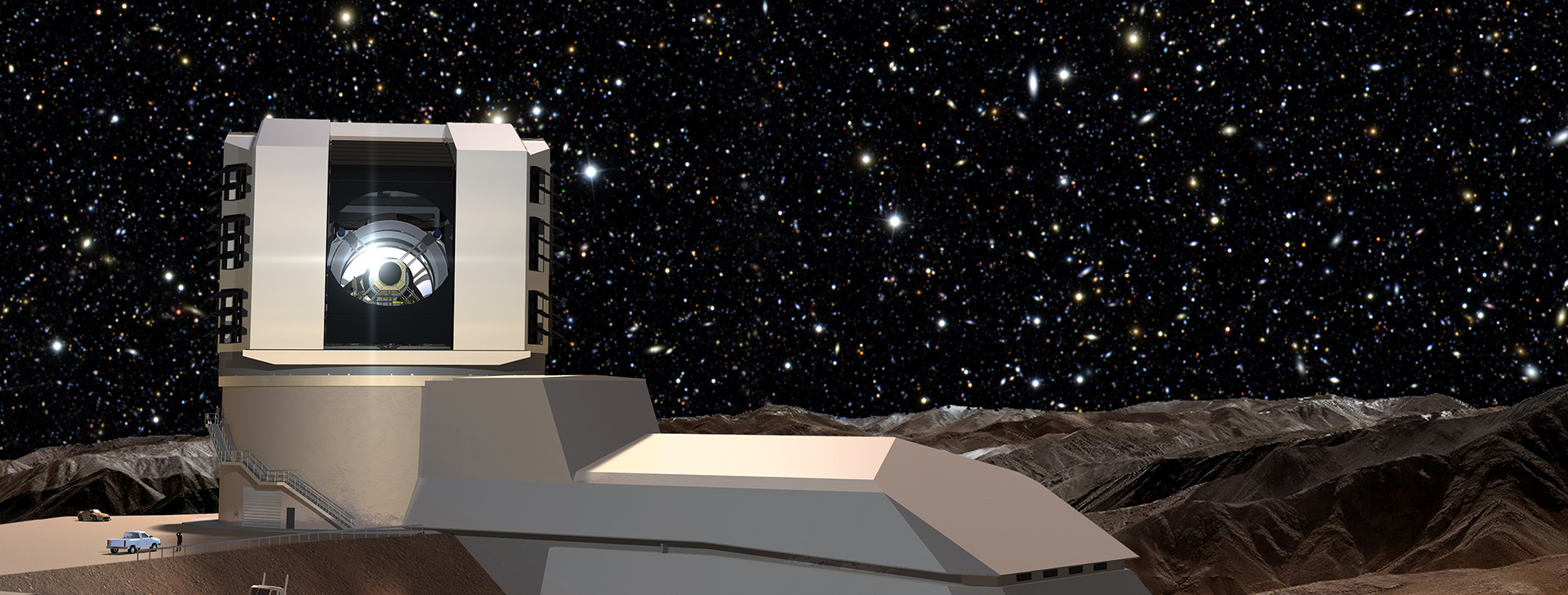

The Large Synoptic Survey Telescope (LSST) is an international physics facility being built on the Cerro Pachón ridge in north-central Chile. It will use a three-billion-pixel camera to conduct a ten-year survey of the night sky in the visible part of the electromagnetic spectrum. The resulting catalogue of images, an estimated 200 petabytes of data, will enable scientists to answer some of the most fundamental questions we have about our Universe. One use of this data is being explored by researchers at the University of Manchester, who are studying the effect of dark matter and dark energy on the observed shapes of galaxies. By examining how galaxies are distorted by an effect known as cosmic shear, they can gain insight into the missing ninety-five percent of everything we believe exists: the so-called Dark Universe.

An artist’s impression of the Large Synoptic Survey Telescope (LSST). LSST is under construction on the Cerro Pachón ridge in north-central Chile, and is due to start scientific operations in 2023. Image credit: Mason Productions Inc./LSST Corporation.

The problem

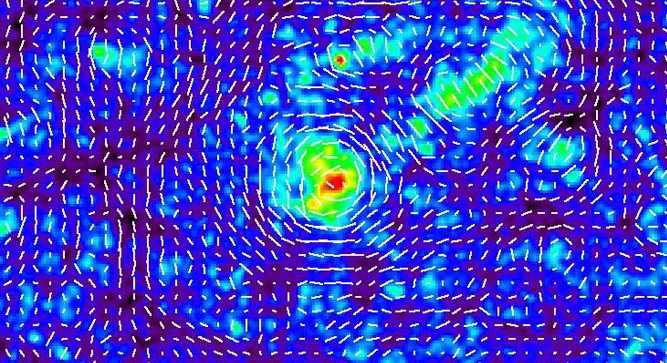

The LSST will produce images of galaxies in a range of frequency bands across the visible electromagnetic spectrum, with each image giving different information about a galaxy’s nature and history. In the past, the measurements needed to determine properties like cosmic shear might have been made by hand, or at least with human-supervised computer processing. With the hundreds of thousands of galaxies expected to be observed by LSST, such approaches are infeasible. Specialised image processing software (Zuntz, 2013) has therefore been developed for use with galaxy images from telescopes like LSST and its predecessors. This software can be used to produce maps like the one shown in Figure 2. The challenge then becomes processing and managing the data for hundreds of thousands of galaxies, and extracting the scientific results required by LSST researchers and the wider astrophysics community.

A “whisker plot” showing the magnitude and direction of the cosmic shear (white tick-marks) caused by the mass distribution (coloured background) from an N-body astrophysical simulation. The degree of cosmic shear inferred from the analysis of LSST survey data can be used to estimate the effect of dark matter and dark energy in our Universe. Image credit: T. Hamana/arXiv.
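Each whisker in a plot like this encodes one shear value. Shear is commonly written as a complex number g1 + i·g2 = |g|·exp(2iφ): the whisker length is |g| and its orientation φ is half the polar angle of (g1, g2), because shear is a spin-2 quantity (rotating a galaxy by 180° leaves its shape unchanged). The short Python sketch below converts shear components into whisker length and angle; the function name and the example values are illustrative and are not taken from IM3SHAPE or from LSST data.

```python
import math


def whisker(g1: float, g2: float) -> tuple:
    """Return (length, angle_deg) of the whisker for shear components (g1, g2).

    Shear is a spin-2 quantity, g1 + i*g2 = |g| * exp(2i*phi), so the
    whisker's orientation phi is half the polar angle of (g1, g2).
    """
    length = math.hypot(g1, g2)                         # |g|
    angle_deg = 0.5 * math.degrees(math.atan2(g2, g1))  # phi, in (-90, 90]
    return length, angle_deg


# A few example shear values (illustrative numbers, not LSST data).
for g1, g2 in [(0.03, 0.0), (0.0, 0.03), (-0.03, 0.0), (0.03, 0.04)]:
    length, angle = whisker(g1, g2)
    print(f"g=({g1:+.2f}, {g2:+.2f}) -> length {length:.3f}, angle {angle:+.1f} deg")
```

The halving of the angle is the important detail: a purely positive g1 gives a horizontal whisker (0°), a purely positive g2 gives one at 45°, and a purely negative g1 gives a vertical one (90°).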

The solution

As each galaxy is essentially independent of the other galaxies in the catalogue, the image processing workflow itself is highly parallelisable. This makes it an ideal problem to tackle with the kind of High-Throughput Computing (HTC) resources and infrastructure offered by GridPP. In many ways, the data from CERN’s Large Hadron Collider particle collision events resembles that produced by a digital camera (indeed, pixel-based detectors are used near the interaction points), and GridPP regularly processes billions of such events as part of the Worldwide LHC Computing Grid (WLCG). You can see how the ATLAS experiment does this in the latter part of this video.
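Why independence makes the problem suitable for high-throughput computing can be shown with a minimal Python sketch. It assumes a hypothetical `measure_shape` function standing in for the real per-galaxy measurement; the catalogue is cut into batches, each batch is processed with no knowledge of the others, and the merged result is the same whatever the batch size. Threads are used here only as a stand-in for separate grid worker nodes.

```python
from concurrent.futures import ThreadPoolExecutor


def measure_shape(galaxy_id: int) -> float:
    # Hypothetical stand-in for a per-galaxy shape measurement. The key
    # property is that the result depends only on this galaxy's own input.
    return (galaxy_id % 7) / 100.0


def make_batches(ids, batch_size):
    # Split the catalogue into contiguous, non-overlapping batches ("jobs").
    return [ids[i:i + batch_size] for i in range(0, len(ids), batch_size)]


def process_batch(batch):
    # One job: measure every galaxy in the batch, with no communication
    # with any other batch.
    return {gid: measure_shape(gid) for gid in batch}


def run_catalogue(ids, batch_size, workers=4):
    # Threads stand in for independent worker nodes. Because batches cover
    # disjoint galaxies, merging their results gives the same dictionary
    # regardless of batch size or merge order.
    results = {}
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for partial in pool.map(process_batch, make_batches(ids, batch_size)):
            results.update(partial)
    return results


catalogue = list(range(1000))
serial = {gid: measure_shape(gid) for gid in catalogue}
assert run_catalogue(catalogue, batch_size=37) == serial
assert run_catalogue(catalogue, batch_size=250, workers=8) == serial
```

On a grid, each batch would instead become a separate job that can run at any site, which is exactly the pattern HTC infrastructure is designed for.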

A pilot exercise, led by Joe Zuntz at the University of Manchester and supported by Senior System Administrator Alessandra Forti, ported the image analysis workflow to GridPP’s distributed computing infrastructure. Data from the Dark Energy Survey (DES) was used for the pilot. After this data had been transferred from the US to GridPP Storage Elements, and the LSST Virtual Organisation had been enabled on a number of GridPP Tier-2 sites, the IM3SHAPE analysis software package (Zuntz, 2013) was tested on local, grid-friendly client machines to ensure it would run smoothly on the grid. Analysis jobs were then submitted and managed using the Ganga software suite, which can coordinate the thousands of individual analyses associated with each batch of galaxies. Initial runs were submitted with Ganga to local grid sites; the pilot then progressed to submitting to multiple sites via the GridPP DIRAC (Distributed Infrastructure with Remote Agent Control) service. Because Ganga supports both types of submission, the transition from local to distributed running was significantly easier.
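The benefit of a tool that separates *what* to run from *where* to run it can be sketched in plain Python. This is an illustration of the idea, not Ganga’s API: a job description carries one argument list per subjob, and moving from development to production changes only the backend field. In a real Ganga session the corresponding pieces are, roughly, a `Job` with an `Executable` application, an `ArgSplitter`, and a `Local()` or `Dirac()` backend; the Ganga documentation should be consulted for exact usage. The script name, catalogue file name and the start/end range arguments below are all hypothetical.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class JobSpec:
    """Hypothetical, backend-agnostic job description (not Ganga's API)."""
    executable: str
    subjob_args: List[List[str]]  # one argument list per subjob
    backend: str = "Local"        # "Local" for development, "Dirac" for production


def split_args(catalogue: str, n_galaxies: int, per_job: int) -> List[List[str]]:
    # Each subjob receives the catalogue name and a half-open [start, end)
    # range of galaxy indices; the last subjob may be shorter.
    return [
        [catalogue, str(start), str(min(start + per_job, n_galaxies))]
        for start in range(0, n_galaxies, per_job)
    ]


# Hypothetical script and catalogue names, for illustration only.
dev = JobSpec("run_im3shape.sh", split_args("des_tile_001.fits", 2500, 1000))

# Moving to multi-site running changes the backend and nothing else.
prod = replace(dev, backend="Dirac")

for args in prod.subjob_args:
    print(prod.backend, prod.executable, *args)
```

Keeping the splitting logic independent of the backend is what allows the same analysis to be debugged on a local site and then scaled out unchanged.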

By the end of the pilot, Zuntz was able to run the image processing workflow on multiple GridPP sites, regularly submitting thousands of analysis jobs on DES images. He has since requested to continue using GridPP to support his scientific work.

What they said

“The pilot is considered to be a success. By the end of the exercise, analyses could be carried out significantly faster than was the case previously (for example, when using highly-contended US-based HPC resources). Thanks are due to the members of the GridPP community for their assistance and support throughout.”

Dr George Beckett, Project Manager, Uni. Edinburgh/LSST

Further details

Services used

- Virtual Organisations: multiple GridPP sites were configured to enable members of the pre-existing LSST VO to access computing and storage resources;

- Ganga: the Ganga software suite was used for grid job submission and management to both local sites (development) and the GridPP DIRAC service (production);

- The GridPP DIRAC service: once local job submission was running successfully, Ganga was configured to use the GridPP DIRAC service for multi-site workload and data management;

- Storage: multiple GridPP sites were configured for storing DES image data so that it could be used in GridPP DIRAC jobs. The GridPP DIRAC File Catalog (DFC) was used to catalogue and manage the datasets once transferred from the US-based network storage.
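What a file catalogue such as the DFC provides can be illustrated with a toy, in-memory version: it maps a logical file name (LFN), which jobs use to refer to a dataset, to the storage elements that hold physical replicas of it. This is a hypothetical sketch of the concept, not the DIRAC API. The LFN path is invented, GridPP site names are used as stand-ins for real storage element names, and the “prefer a local copy” rule is an assumed policy for illustration.

```python
from collections import defaultdict


class ToyFileCatalogue:
    """Hypothetical in-memory file catalogue: LFN -> set of storage elements.

    Illustrates the idea behind a file catalogue such as the DFC; it is not
    the DIRAC API.
    """

    def __init__(self):
        self._replicas = defaultdict(set)

    def register(self, lfn: str, storage_element: str) -> None:
        # Record that a physical copy of `lfn` now exists at `storage_element`.
        self._replicas[lfn].add(storage_element)

    def replicas(self, lfn: str) -> list:
        # Sorted for deterministic output; .get avoids creating empty entries.
        return sorted(self._replicas.get(lfn, ()))

    def choose_replica(self, lfn: str, job_site: str) -> str:
        # Assumed policy: prefer a copy at the site where the job runs,
        # otherwise fall back to any registered replica.
        reps = self.replicas(lfn)
        if not reps:
            raise KeyError(f"no replicas registered for {lfn}")
        return job_site if job_site in reps else reps[0]


dfc = ToyFileCatalogue()
lfn = "/lsst/des/tile_001.fits"  # hypothetical LFN
dfc.register(lfn, "UKI-NORTHGRID-MAN-HEP")
dfc.register(lfn, "UKI-SOUTHGRID-RALPP")
print(dfc.replicas(lfn))
print(dfc.choose_replica(lfn, "UKI-SOUTHGRID-RALPP"))  # a copy exists at this site
print(dfc.choose_replica(lfn, "UKI-LT2-QMUL"))         # falls back to a remote copy
```

Because jobs refer only to the LFN, data can be replicated to additional sites without changing any job definitions.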

Supporting GridPP sites

UKI-LT2-BRUNEL, UKI-LT2-IC-HEP, UKI-LT2-QMUL, UKI-NORTHGRID-LANCS-HEP, UKI-NORTHGRID-LIV-HEP, UKI-NORTHGRID-MAN-HEP, UKI-SCOTGRID-ECDF, UKI-SOUTHGRID-OX-HEP, UKI-SOUTHGRID-RALPP.

Virtual Organisation(s)

LSST

Publications

- Kilbinger, M. (2015). “Cosmology with cosmic shear observations: a review”, Rep. Prog. Phys. 78 086901 http://dx.doi.org/10.1088/0034-4885/78/8/086901 (arXiv);

- Zuntz, J. (2013). “IM3SHAPE: a maximum likelihood galaxy shear measurement code for cosmic gravitational lensing”, MNRAS 434, 1604-1618 http://dx.doi.org/10.1093/mnras/stt1125.

Acknowledgements

In addition to the support provided by STFC for GridPP, this specific work was supported by the European Research Council through Starting Grant number 240672.

Useful links

- The Large Synoptic Survey Telescope homepage;

- Read more about STFC’s research into dark matter and dark energy;

- Joe Zuntz’s research homepage;

- IM3SHAPE in the Monthly Notices of the Royal Astronomical Society (MNRAS) (Zuntz, 2013) and on BitBucket;

- The LSST Virtual Organisation’s entry in the EGI Operations Portal.