VacProject: VM lifecycle management

The Vac Project produces VM lifecycle managers that implement the vacuum model. Andrew's CHEP 2013 talk "Running LHCb jobs in the Vacuum" gives an overview of this model and the Vac implementation.

Vac applies the model to a group of autonomous worker node class machines. Vcycle reuses components of Vac to apply the vacuum model to manage VMs on IaaS Cloud services such as OpenStack.

The Vacuum Model

The vacuum model is an alternative to conventional Grid job submission and to centrally managed use of Infrastructure-as-a-Service clouds. Following the 2014 paper "Running jobs in the vacuum" (A McNab et al 2014 J. Phys.: Conf. Ser. 513 032065):

The Vacuum model can be defined as a scenario in which virtual machines are created and contextualized for experiments by the resource provider. The contextualization procedures are supplied in advance by the experiments and launch clients within the virtual machines to obtain work from the experiments' central queue of tasks.

For the experiments, VMs appear by "spontaneous production in the vacuum". Like virtual particles in the physical vacuum, they appear, potentially interact, and then disappear. There is no centrally submitted pilot job; instead, the VM architecture includes some form of job agent that fetches work from a central task queue, as with pilot jobs.

For resource providers, this model gives them direct control over the shares allocated to each experiment.

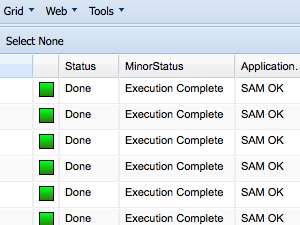

It is the role of the VM lifecycle manager to create the types of VMs specified by the experiments; to monitor the outcomes of recent VMs to discover which experiments have more or less work available; and to create new VMs in response to these outcomes and the resource provider's target shares.
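The decision described above can be illustrated with a minimal Python sketch. It is not Vac's actual algorithm or data model: the `ExperimentState` fields, the `choose_next_vm_type` function, and the rule of picking the experiment with the fewest running VMs relative to its target share are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class ExperimentState:
    """Hypothetical per-experiment bookkeeping; not Vac's real data model."""
    name: str
    target_share: float    # resource provider's target share (any positive weight)
    running_vms: int       # VMs of this type currently running
    recent_no_work: bool   # True if recent VMs stopped because no work was available


def choose_next_vm_type(experiments):
    """Return the name of the experiment to create the next VM for, or None."""
    # Skip experiments whose recent VM outcomes suggest they have no work.
    candidates = [
        e for e in experiments
        if not e.recent_no_work and e.target_share > 0
    ]
    if not candidates:
        return None
    # Assumed rule: favour the experiment furthest below its target share,
    # measured as running VMs per unit of share.
    best = min(candidates, key=lambda e: e.running_vms / e.target_share)
    return best.name


states = [
    ExperimentState("lhcb", target_share=50.0, running_vms=4, recent_no_work=False),
    ExperimentState("atlas", target_share=30.0, running_vms=1, recent_no_work=False),
    ExperimentState("cms", target_share=20.0, running_vms=0, recent_no_work=True),
]
print(choose_next_vm_type(states))  # prints "atlas"
```

In this example "cms" is skipped because its recent VMs found no work, and "atlas" is chosen over "lhcb" because it is running fewer VMs relative to its share.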

In 2016, a set of interfaces was defined for the "Vacuum Platform" in HEP Software Foundation technical note HSF-TN-2016-04.

Vac

Vac implements this model at the level of the individual physical worker node class machine, which is referred to as a factory machine. Each factory acts autonomously, using the target shares and policy in its configuration files, combined with information on current VM outcomes gathered from the other factories using the VacQuery UDP protocol. APEL accounting is implemented by direct reporting from each factory node to the central APEL service rather than using an intermediate service at the site. A Vac Puppet module is provided to install and configure Vac on each factory.

This model removes the need for the site to maintain a number of services required at grid sites, each of which can be a single point of failure. Consequently, Vac sites need:

- no gatekeeper service (such as CREAM or ARC) to accept incoming pilot jobs

- no batch system headnode to centrally manage the pilot jobs on each worker node

- no site BDII to publish status

- no ARGUS server to manage pilot job access and gridmapdir mappings

- no site APEL service to buffer accounting information

Typically, running jobs in VMs at a Vac site relies only on a basic set of services (DNS, DHCP, Kickstart, Puppet) to install and configure factories, and on the Vac daemon itself on each physical factory machine.

Pilot VMs

The simplest way to use Vac is with the "Pilot VM" architecture, in which the agent deployed in grid pilot jobs is reused and run directly within the VM as a result of the contextualization procedure. Pilot VMs based on CernVM have been in production at Vac sites for LHCb since June 2013, for GridPP DIRAC and ATLAS since May 2014, and for CMS since November 2014. Details of each experiment's VMs are available in the sidebar on the left. The same Pilot VMs can also be used on IaaS resources managed by Vcycle.


Documentation

The vacd(8), vac.conf(5), vac(1), and check-vacd(8) man pages and the Admin Guide are available on this website and within the Vac distribution.

Downloads

Vac RPMs are available in the downloads area either by direct download or by using each minor version's YUM repo.

The source can be viewed at vacproject on GitHub.com.

Support

The support page has details of the vac-announce@cern.ch and vac-users@cern.ch mailing lists, and the GitHub and GGUS issue trackers for Vac and Vcycle.