Difference between revisions of "Tier1 Operations Report 2018-11-20"

From GridPP Wiki

(→) |

(→) |

||

| Line 13: | Line 13: | ||

* On Thursday 15th November, all non-LHC VOs were migrated to the new consolidated Castor tape instance. | * On Thursday 15th November, all non-LHC VOs were migrated to the new consolidated Castor tape instance. | ||

* CMS AAA issues are ongoing, although we may have finally turned a corner! It was found that certain CMS requests do not specify the Ceph pool to use (in this case it should be “cms”). There was no default pool set, thus the request fails. It was found that a bug meant that any subsequent requests would be sent to the (non-set) default pool causing all transfers to fail. This required a restart of the XRootD process. For the CMS AAA service we have added a default pool and monitoring of the logs, which will restart the process should it detect that transfers are using a non-set pool. SAM test results were at 96% over the weekend. | * CMS AAA issues are ongoing, although we may have finally turned a corner! It was found that certain CMS requests do not specify the Ceph pool to use (in this case it should be “cms”). There was no default pool set, thus the request fails. It was found that a bug meant that any subsequent requests would be sent to the (non-set) default pool causing all transfers to fail. This required a restart of the XRootD process. For the CMS AAA service we have added a default pool and monitoring of the logs, which will restart the process should it detect that transfers are using a non-set pool. SAM test results were at 96% over the weekend. | ||

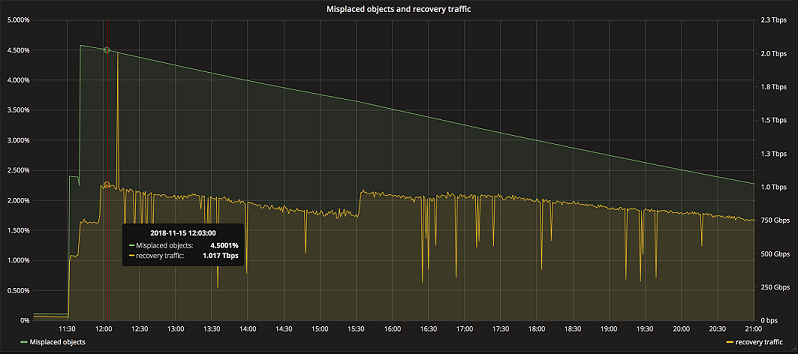

| − | * We have been experiencing issues with Ceph/Echo | + | * We have been experiencing issues with (Ceph/Echo), Cluster Vision 17 disk servers that were occasionally/randomly rebooting. This has now been resolved with a firmware patch that was rolled out last week. The hardware continues to be weighted up and we exceed 1Tb/s aggregated throughput on Friday 16th - graph below. |

| − | + | [[File:Echoplot.png]] | |

<!-- ***********End Review of Issues during last week*********** -----> | <!-- ***********End Review of Issues during last week*********** -----> | ||

<!-- *********************************************************** -----> | <!-- *********************************************************** -----> | ||

Revision as of 09:53, 20 November 2018

RAL Tier1 Operations Report for 20th November 2018

| Review of Issues during the week 13th November to the 20th November 2018. |

- On Thursday 15th November, all non-LHC VOs were migrated to the new consolidated Castor tape instance.

- CMS AAA issues are ongoing, although we may have finally turned a corner! Certain CMS requests do not specify the Ceph pool to use (in this case it should be “cms”). No default pool was set, so these requests failed. A bug then caused all subsequent requests to be sent to the (non-set) default pool, so all transfers failed until the XRootD process was restarted. For the CMS AAA service we have added a default pool and log monitoring that restarts the process if it detects transfers using a non-set pool. SAM test results were at 96% over the weekend.

- We have been experiencing issues with the Cluster Vision 17 disk servers (Ceph/Echo), which were occasionally rebooting at random. This has now been resolved with a firmware patch that was rolled out last week. The hardware continues to be weighted up and we exceeded 1Tb/s aggregated throughput on Friday 16th (graph: Echoplot.png).
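The CMS AAA mitigation described above (a default pool plus log monitoring that triggers a restart) can be illustrated with a minimal Python sketch. It is not the script running at RAL: the log marker `pool=<unset>`, the function names and the `restart` callback are all hypothetical, since the report does not give the real XRootD log format or restart mechanism.

```python
from typing import Callable, Iterable, Optional

DEFAULT_POOL = "cms"  # the default pool named in the report

# Hypothetical marker: the real XRootD/Ceph log text is not given in the report.
UNSET_POOL_MARKER = "pool=<unset>"


def resolve_pool(requested: Optional[str], default: str = DEFAULT_POOL) -> str:
    """Return the requested pool, falling back to the default when none is given."""
    return requested if requested else default


def uses_unset_pool(line: str, marker: str = UNSET_POOL_MARKER) -> bool:
    """True if a log line shows a transfer addressed to a non-set pool."""
    return marker in line


def watch(lines: Iterable[str], restart: Callable[[], None]) -> int:
    """Call restart() for each offending log line and return how many were seen."""
    restarts = 0
    for line in lines:
        if uses_unset_pool(line):
            restart()
            restarts += 1
    return restarts
```

In practice `lines` would be a stream following the service log and `restart` would wrap whatever service manager is in use; both are left abstract here because the report does not describe them.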

| Current operational status and issues |

- NTR

| Resolved Castor Disk Server Issues |

| Machine | VO | DiskPool | dxtx | Comments |

|---|---|---|---|---|

| - | - | - | - | - |

| Ongoing Castor Disk Server Issues |

| Machine | VO | DiskPool | dxtx | Comments |

|---|---|---|---|---|

| gdss788 | WLCG | gridTape | d0t1 | - |

| Limits on concurrent batch system jobs. |

- None currently enforced.

| Notable Changes made since the last meeting. |

- NA62 were successfully migrated to the new consolidated Castor tape instance.

| Entries in GOC DB starting since the last report. |

| Service | ID | Scheduled? | Outage/At Risk | Start | End | Duration | Reason |

|---|---|---|---|---|---|---|---|

| - | - | - | - | - | - | - | - |

| Declared in the GOC DB |

| Service | ID | Scheduled? | Outage/At Risk | Start | End | Duration | Reason |

|---|---|---|---|---|---|---|---|

| CASTOR | 26325 | Yes | Outage | 15/11/2018 | 15/11/2018 | 3Hrs | Castor SRM endpoint migration |

| CASTOR | 26338 | Yes | Outage | 20/11/2018 | 20/11/2018 | 3Hrs | CASTOR out as part of Oracle Patch Installation (Neptune and Pluto environments) |

- No ongoing downtime

- No downtime scheduled in the GOCDB for next 2 weeks

| Advanced warning for other interventions |

| The following items are being discussed and are still to be formally scheduled and announced. |

Listing by category:

- DNS servers will be rolled out within the Tier1 network.

| Open GGUS Tickets (Snapshot taken during morning of the meeting). |

| Request id | Affected vo | Status | Priority | Date of creation | Last update | Type of problem | Subject | Scope |

|---|---|---|---|---|---|---|---|---|

| 138218 | cms | in progress | urgent | 09/11/2018 | 12/11/2018 | CMS_Data Transfers | Transfers failing from RAL_Buffer to TIFR | WLCG |

| 138033 | atlas | in progress | urgent | 01/11/2018 | 08/11/2018 | Other | singularity jobs failing at RAL | EGI |

| 137897 | enmr.eu | waiting for reply | urgent | 23/10/2018 | 12/11/2018 | Accounting | enmr.eu accounting at RAL | EGI |

| 137822 | lhcb | in progress | top priority | 18/10/2018 | 31/10/2018 | File Transfer | FTS server seems in bad state. | WLCG |

| 137650 | cms | in progress | very urgent | 09/10/2018 | 12/11/2018 | CMS_AAA WAN Access | Low HC xrootd success rates at T1_UK_RAL | WLCG |

| 137153 | t2k.org | in progress | urgent | 12/09/2018 | 12/11/2018 | Data Management - generic | LFC entry has file size 0, prevents registering of additional replicas | EGI |

| GGUS Tickets Closed Last week |

| Request id | Affected vo | Status | Priority | Date of creation | Last update | Type of problem | Subject | Scope |

|---|---|---|---|---|---|---|---|---|

| 138103 | cms | solved | urgent | 05/11/2018 | 07/11/2018 | CMS_Data Transfers | Transfers failing from RALPP to RAL | WLCG |

| 138077 | cms | solved | urgent | 02/11/2018 | 08/11/2018 | CMS_SAM tests | SAM test critical T1_UK_RAL | WLCG |

| 138028 | lhcb | verified | urgent | 01/11/2018 | 12/11/2018 | File Access | File cannot be staged | WLCG |

| 137752 | other | solved | less urgent | 15/10/2018 | 12/11/2018 | VO Specific Software | Replicate OSG CVMFS repositories to EGI stratum 1s | EGI |

| 136701 | lhcb | verified | very urgent | 14/08/2018 | 06/11/2018 | File Transfer | background of transfer errors | WLCG |

| 136199 | lhcb | verified | very urgent | 18/07/2018 | 06/11/2018 | File Transfer | Lots of submitted transfers on RAL FTS | WLCG |

| Availability Report |

| Target Availability for each site is 97.0% | Red <90% | Orange <97% |

| Day | Atlas | Atlas-Echo | CMS | LHCB | Alice | OPS | Comments |

|---|---|---|---|---|---|---|---|

| 2018-11-07 | 100 | 100 | 99 | 100 | 100 | 100 | |

| 2018-11-08 | 100 | 100 | 100 | 100 | 100 | 100 | |

| 2018-11-09 | 100 | 100 | 100 | 100 | 100 | 100 | |

| 2018-11-10 | 100 | 100 | 100 | 100 | 100 | 100 | |

| 2018-11-11 | 100 | 100 | 100 | 100 | 100 | 100 | |

| 2018-11-12 | 100 | 100 | 100 | 100 | 100 | 100 | |

| 2018-11-13 | 100 | 100 | 87 | 100 | 100 | 100 | |
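The colour thresholds stated above the table (target 97.0%, orange below 97%, red below 90%) can be applied mechanically. The sketch below is illustrative only: the function name `classify` and the dictionary layout are assumptions, and the figures are the CMS column copied from the table.

```python
TARGET = 97.0     # target availability; below this a day is "orange"
RED_BELOW = 90.0  # below this a day is "red"


def classify(availability: float) -> str:
    """Map a daily availability percentage to the report's colour bands."""
    if availability < RED_BELOW:
        return "red"
    if availability < TARGET:
        return "orange"
    return "ok"


# CMS column from the availability table above.
cms = {
    "2018-11-07": 99,
    "2018-11-08": 100,
    "2018-11-09": 100,
    "2018-11-10": 100,
    "2018-11-11": 100,
    "2018-11-12": 100,
    "2018-11-13": 87,
}

flagged = {day: classify(value) for day, value in cms.items() if classify(value) != "ok"}
print(flagged)  # {'2018-11-13': 'red'}
```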

| Hammercloud Test Report |

| Target Availability for each site is 97.0% | Red <90% | Orange <97% |

| Day | Atlas HC | CMS HC | Comment |

|---|---|---|---|

| 2018-11-06 | 98 | 100 | |

| 2018-11-07 | 100 | 99 | |

| 2018-11-08 | 100 | 99 | |

| 2018-11-09 | 100 | 98 | |

| 2018-11-10 | 100 | 96 | |

| 2018-11-11 | 100 | 92 | |

| 2018-11-12 | 100 | 99 | |

| 2018-11-13 | 100 | 99 | |

Key: Atlas HC = Atlas HammerCloud (Queue RAL-LCG2_UCORE, Template 841); CMS HC = CMS HammerCloud

| Notes from Meeting. |